Symbiotic Alignment via Collective Predictive Coding: A Theoretical Framework for Co-Creative Human-AI Ecosystems

We propose Symbiotic Alignment, where AI alignment emerges from human-AI interaction rather than top-down control, grounded in Collective Predictive Coding.

March 2026 | Tadahiro Taniguchi, Yusuke Hayashi, Momoha Hirose, Mizuki Oka, Ken Suzuki, Olaf Witkowski, Audrey Tang | Artificial Life (journal submission)

Summary

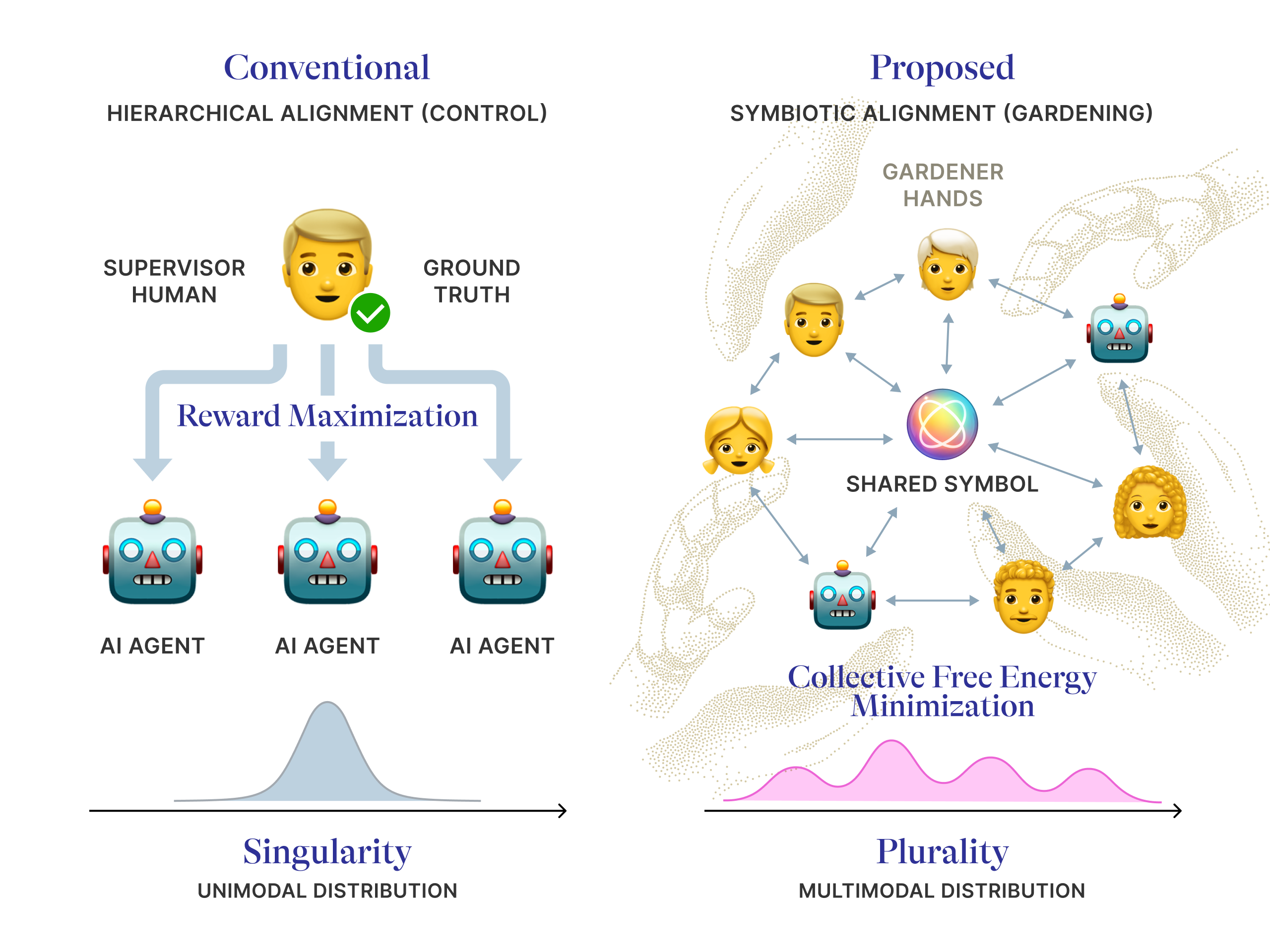

Today’s AI systems are aligned through control: a small group of developers defines what counts as “correct,” and models are trained to obey. But as AI reshapes how we communicate, make decisions, and form beliefs, this top-down approach fails to address the emergent, society-wide risks that AI creates, from filter bubbles to democratic backsliding. We propose a fundamentally different paradigm: Symbiotic Alignment (SA), in which alignment is not a constraint imposed from above but a coherence that emerges as humans and AI agents negotiate shared meaning together.

We ground this vision in Collective Predictive Coding (CPC), a mathematical framework from Artificial Life research — the study of how life-like behaviors such as evolution, self-organization, and collective intelligence can emerge in biological and computational systems. CPC models how groups of agents — human or artificial — can collectively build a shared understanding of the world through decentralized communication, without any single agent holding privileged “ground truth.” The result is a formal bridge between AI alignment and the sociotechnical vision of plurality: a future where diverse worldviews coexist and dynamically harmonize, rather than being forced into a single mold.

Background

The dominant approach to AI alignment relies on Reinforcement Learning from Human Feedback (RLHF) and related methods. The core assumption is hierarchical: humans supervise, AI obeys. Yet this creates a critical bottleneck — whose values count as “ground truth”? In practice, the authority to define what AI considers “correct” is monopolized by a small number of developers and corporations. The result is not universal alignment but the quiet imposition of a particular worldview onto billions of users.

Meanwhile, AI-driven recommendation algorithms optimizing for individual engagement are accelerating social polarization. According to V-Dem Institute data, the share of the world’s population living in liberal democracies has dropped from 51% in 2004 to 28% in 2024. As Audrey Tang has observed, these systems maximize “polarization per minute” rather than fostering the plurality essential for societal health. The problem is not a bug in any single model — it is an emergent property of the entire human-AI ecosystem.

From Control to Co-Creation

SA reframes alignment as a process of mutual adaptation rather than unilateral control. Instead of training AI to match a fixed reward function, SA envisions humans and AI agents collectively negotiating shared symbols, norms, and culture through ongoing interaction.

The mathematical backbone is CPC, formalized as an extension of Multi-Agent Reinforcement Learning (MARL). The key insight is a single addition: a collective regularization term that gives every agent, human or AI, an intrinsic drive to build shared representations with others rather than only pursue individual goals. Whereas the standard MARL objective decomposes into each agent maximizing its own reward, this term couples the agents: it exerts a shared pull toward mutual understanding, a tendency to co-construct meaning, that no single agent can produce alone.
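To make the contrast between a decomposable and a collective objective concrete, here is a minimal Python sketch. It is not the paper’s formulation: the form J = Σ_i R_i − λ Σ_i KL(q_i ‖ q_bar), where each agent’s belief q_i is a discrete distribution over shared symbols and q_bar is their element-wise average, is an assumption chosen for illustration, and the names `collective_objective`, `beliefs`, and `lam` are hypothetical. The reward term splits agent by agent; the regularizer does not, because q_bar depends on every agent at once.

```python
import math

def kl(p, q):
    """D_KL(p || q) for discrete distributions; assumes q[j] > 0 wherever p[j] > 0."""
    return sum(pj * math.log(pj / qj) for pj, qj in zip(p, q) if pj > 0)

def mean_belief(beliefs):
    """Element-wise average of the agents' belief distributions (the hypothetical q_bar)."""
    n = len(beliefs)
    return [sum(col) / n for col in zip(*beliefs)]

def collective_objective(rewards, beliefs, lam):
    """Hypothetical CPC-style objective: summed individual rewards minus lam times
    the summed divergence of each agent's belief from the group mean."""
    q_bar = mean_belief(beliefs)
    individual = sum(rewards)                        # decomposes agent by agent
    collective = sum(kl(q, q_bar) for q in beliefs)  # couples all agents through q_bar
    return individual - lam * collective

# Same individual rewards, different degrees of shared representation.
rewards = [1.0, 1.0]
aligned = [[0.7, 0.3], [0.7, 0.3]]
divergent = [[0.9, 0.1], [0.1, 0.9]]

j_aligned = collective_objective(rewards, aligned, lam=1.0)
j_divergent = collective_objective(rewards, divergent, lam=1.0)
print(j_aligned, j_divergent)  # aligned beliefs score higher
```

With equal weights, Σ_i KL(q_i ‖ q_bar) equals n times the generalized Jensen-Shannon divergence of the beliefs, so under this assumed form the regularizer vanishes exactly when all agents hold the same distribution.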

This is how language, culture, and social norms have always emerged in human societies — from the bottom up, through interaction. CPC provides the computational substrate for extending this process to include AI.

Plurality Without Forced Consensus

A critical question arises: does collective coherence require everyone to agree? SA’s answer is no. Within the CPC framework, disagreement between communities is not only tolerated but expected — diverse worldviews coexist as distinct clusters within a shared belief landscape, each representing a locally coherent perspective. Rather than treating this as a failure to be corrected, SA treats it as epistemic information to be explored.
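The idea that local coherence can coexist with global disagreement can be illustrated with a standard opinion-dynamics model. The sketch below uses the Hegselmann-Krause bounded-confidence model, a swapped-in technique rather than CPC itself: each agent repeatedly moves to the average of all opinions within a distance `eps` of its own. The initial opinions and the value of `eps` are arbitrary assumptions for illustration. Because agents only listen to nearby views, the population settles into two locally coherent clusters instead of a single consensus.

```python
def hk_step(opinions, eps):
    """One synchronous Hegselmann-Krause update: each agent moves to the mean
    of all opinions (its own included) within distance eps of its current one."""
    new = []
    for x in opinions:
        peers = [y for y in opinions if abs(y - x) <= eps]
        new.append(sum(peers) / len(peers))
    return new

def run_hk(opinions, eps, max_steps=100, tol=1e-9):
    """Iterate updates until no opinion moves by more than tol (or max_steps is hit)."""
    for _ in range(max_steps):
        new = hk_step(opinions, eps)
        if max(abs(a - b) for a, b in zip(new, opinions)) < tol:
            return new
        opinions = new
    return opinions

def clusters(opinions, decimals=6):
    """Distinct opinion values after rounding away floating-point noise."""
    return sorted(set(round(x, decimals) for x in opinions))

# Illustrative starting point: two loose groups on a one-dimensional belief axis.
final = run_hk([0.0, 0.1, 0.2, 0.8, 0.9, 1.0], eps=0.25)
print(clusters(final))  # [0.1, 0.9]
```

Raising `eps` above the gap between the groups would merge them into one cluster; in this toy model, whether plurality persists depends on how far each agent’s attention reaches.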

This has a concrete precedent. Polis, the open-source collective intelligence platform used by Taiwan’s vTaiwan initiative, surfaces areas of consensus across diverse stakeholders, not by forcing agreement but by identifying “uncommon common ground” among otherwise polarized groups. Taiwan’s Ministry of Digital Affairs later extended this approach through Alignment Assemblies, collaborating with the Collective Intelligence Project, Anthropic, and OpenAI to invite citizens to co-create principles for AI development. In Collective Constitutional AI, Anthropic and the Collective Intelligence Project used Polis to gather input from approximately 1,000 U.S. adults; a model trained on the resulting publicly derived constitution showed less bias on social benchmarks while maintaining reasoning performance.
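“Uncommon common ground” can be made concrete with a scoring rule that rewards a statement only when every opinion group tends to agree with it. The sketch below is loosely modeled on the group-informed consensus idea associated with Polis, but the exact formula (a product over groups of Laplace-smoothed agreement rates), the group labels, and the vote counts are illustrative assumptions, not the platform’s implementation or real data. With unequal group sizes, a raw majority count favors a partisan statement, while the group-aware score favors the bridging one.

```python
from math import prod

# Hypothetical vote counts: statement -> {group: (agree_votes, total_votes)}.
# Group A is four times larger than group B.
STATEMENTS = {
    "partisan": {"A": (78, 80), "B": (2, 20)},
    "bridging": {"A": (60, 80), "B": (14, 20)},
}

def raw_agreement(votes):
    """Fraction of all voters who agree, ignoring group membership."""
    agree = sum(a for a, _ in votes.values())
    total = sum(t for _, t in votes.values())
    return agree / total

def group_consensus(votes):
    """Product of each group's Laplace-smoothed agreement rate: a statement
    scores high only if every group, not just the largest, tends to agree."""
    return prod((a + 1) / (t + 2) for a, t in votes.values())

best_raw = max(STATEMENTS, key=lambda s: raw_agreement(STATEMENTS[s]))
best_group = max(STATEMENTS, key=lambda s: group_consensus(STATEMENTS[s]))
print(best_raw, best_group)  # partisan bridging
```

The multiplicative form is the design choice that matters here: a single group’s strong rejection pulls the whole score toward zero, so no majority can carry a statement on its own.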

SA via CPC provides a theoretical account of why such approaches work and how they could scale: decentralized agents, negotiating shared symbols through local interaction, can reach coherence without any central authority dictating terms.
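A classic toy demonstration of decentralized agents converging on shared symbols is the minimal naming game from the semiotic-dynamics literature. The sketch below implements that simpler model, not the probabilistic naming games studied in CPC research and not the paper’s method; the population size, round cap, and seed are arbitrary assumptions. Agents meet in random pairs. A speaker utters a name from its inventory, inventing one if it has none. If the listener already knows that name, both agents discard every competing name; otherwise the listener adds it. No agent is privileged, yet the population ends up sharing a single name.

```python
import random

def naming_game(n_agents=20, max_rounds=100_000, seed=0):
    """Minimal naming game on a fully connected population.
    Returns (rounds_to_consensus, shared_vocabulary), or (None, None) if the cap is hit."""
    rng = random.Random(seed)
    inventories = [set() for _ in range(n_agents)]
    next_word = 0  # counter used to invent fresh word ids
    for t in range(1, max_rounds + 1):
        speaker, listener = rng.sample(range(n_agents), 2)
        if not inventories[speaker]:
            inventories[speaker].add(next_word)  # invent a new name
            next_word += 1
        word = rng.choice(sorted(inventories[speaker]))
        if word in inventories[listener]:
            # Success: both agents drop every competing name.
            inventories[speaker] = {word}
            inventories[listener] = {word}
        else:
            # Failure: the listener learns the speaker's name.
            inventories[listener].add(word)
        # Consensus: every agent holds the same single name.
        if all(len(inv) == 1 and inv == inventories[0] for inv in inventories):
            return t, inventories[0]
    return None, None

rounds, vocabulary = naming_game()
print(rounds, vocabulary)
```

Replacing the random pairing with a restricted interaction network is one way to explore how communication topology shapes whether, and how quickly, a shared convention emerges.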

Vision: Not Architects, but Gardeners

SA emerges from a tradition that has long studied how coherent order arises from the bottom up: Artificial Life research. The principles of emergence, self-organization, and complex adaptive systems that define this field are exactly what SA applies to the challenge of AI alignment — treating the human-AI network not as a machine to be controlled, but as a living ecosystem to be cultivated.

This is the core idea: we are not architects of AI alignment; we are gardeners. An architect imposes a fixed blueprint — deciding in advance exactly what the structure should look like. A gardener, by contrast, designs the conditions — soil, sunlight, spacing — and then lets the garden grow. In SA, those conditions are communication topologies, interaction protocols, and incentive structures, under which healthy collective dynamics can self-organize. Our paper, Symbiotic Alignment via Collective Predictive Coding, identifies three concrete design problems for this gardening agenda: building AI agents capable of co-creative learning (contributing to shared meaning, not just consuming it), designing AI as a connector of people (bridging fragmented communities rather than reinforcing filter bubbles), and creating governance mechanisms that steer the ecosystem away from pathological equilibria without coercive uniformity.

Redefining alignment not as fixed code but as a living order that emerges from interaction, and preventing any single entity from monopolizing values, may be the only path to preserving the shared reality on which democracy rests. We invite the community to build this with us.